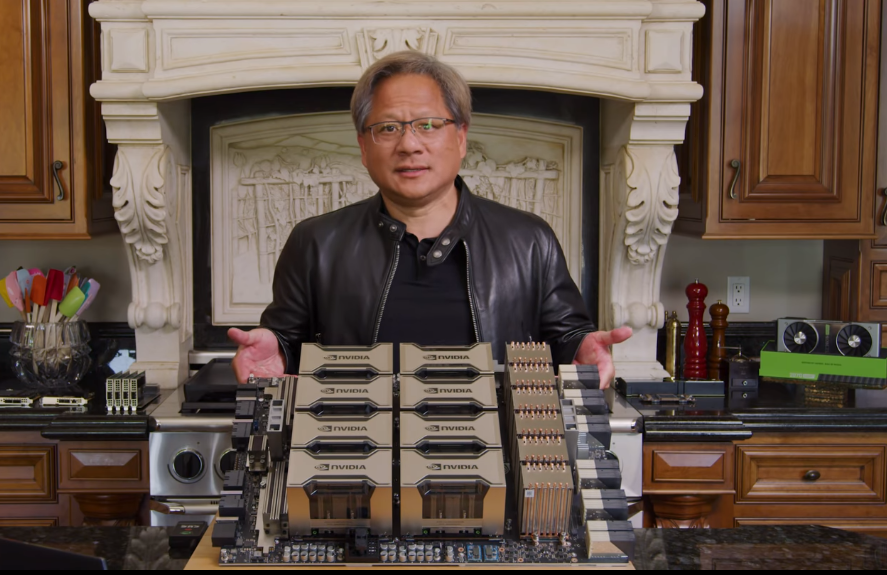

Recently, NVIDIA CEO and co-founder Jensen Huang introduced the NVIDIA A100 GPU. Huang made the announcement from the kitchen of his California home, alongside discussions of important new software technologies such as NVIDIA Jarvis, as well as the recent Mellanox acquisition. The A100 is said to be the first GPU based on the NVIDIA Ampere architecture, with 54 billion transistors.

The NVIDIA A100 Tensor Core GPU is said to deliver unprecedented acceleration at every scale for AI, data analytics, and high-performance computing (HPC) to tackle highly complex processing challenges.

Behind A100

The NVIDIA A100 is part of the complete NVIDIA data centre solution, which incorporates building blocks across hardware, networking, software, libraries, and optimised AI models and applications from NGC, the hub for GPU-optimised software for deep learning, machine learning, and HPC.

This AI chip is billed as the most powerful end-to-end AI and HPC platform for data centres, allowing researchers to deliver real-world results and deploy solutions into production at scale.

According to a blog post, this chip draws on design breakthroughs in the NVIDIA Ampere architecture, which offers the company’s largest leap in performance to date within its eight generations of GPUs, to unify AI training and inference and to boost performance by up to 20x over its predecessors.

Huang stated, “The powerful trends of cloud computing and AI are driving a tectonic shift in data centre designs so that what was once a sea of CPU-only servers is now GPU-accelerated computing.” He added, “NVIDIA A100 GPU is a 20x AI performance leap and an end-to-end machine learning accelerator — from data analytics to training and inference. For the first time, scale-up and scale-out workloads can be accelerated on one platform. NVIDIA A100 will simultaneously boost throughput and drive down the cost of data centres.”

Breakthroughs

The NVIDIA A100 GPU is a technical design breakthrough fuelled by five key innovations, which together result in 6x higher performance than NVIDIA’s previous-generation Volta architecture for training and 7x higher performance for inference. The innovations are listed below:

- NVIDIA Ampere Architecture: At the core of this chip is the NVIDIA Ampere GPU architecture, which contains more than 54 billion transistors, making it the largest 7-nanometer processor in the world.

- Third-Generation Tensor Cores with TF32: The A100 introduces TF32, a new precision for artificial intelligence that allows for up to 20x the AI performance of FP32 precision without any code changes.

- Multi-Instance GPU: Multi-Instance GPU, or MIG, is a new technology that allows multiple networks to operate simultaneously on a single A100 GPU. It enables a single A100 to be partitioned into as many as seven separate GPUs, delivering varying degrees of computing for jobs of different sizes while providing optimal utilisation of computing resources and maximising return on investment.

- Third-Generation NVIDIA NVLink: The third-generation NVIDIA NVLink doubles the high-speed connectivity between GPUs, providing efficient performance scaling in a server.

- Structural Sparsity: Structural sparsity is a new technique that harnesses the inherently sparse nature of AI math to double the performance.
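
Two of the innovations above, TF32 and structural sparsity, can be illustrated in a few lines of plain Python. The sketch below is only an emulation under stated assumptions, not NVIDIA's implementation: it assumes TF32 keeps FP32's sign bit and 8-bit exponent but only 10 mantissa bits, and it assumes the 2:4 pattern (two non-zero values in every group of four) that NVIDIA documents for the A100's sparse Tensor Cores, a detail not spelled out in the announcement. The function names `to_tf32` and `prune_2_of_4` are hypothetical, and the truncation used here may differ from the rounding the hardware performs.

```python
import struct


def to_tf32(x: float) -> float:
    """Emulate TF32 precision (assumption: 1 sign, 8 exponent, 10 mantissa bits).

    The value is first stored as FP32, then the low 13 of its 23 mantissa
    bits are cleared. This truncates; real hardware may round instead.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    bits &= 0xFFFFE000  # keep sign, exponent and the top 10 mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in every group of four.

    Assumption: this mirrors the 2:4 structured-sparsity pattern. It only
    produces the pattern; any speed-up comes from hardware skipping zeros.
    """
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest-magnitude entries in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(v if i in keep else 0.0 for i, v in enumerate(group))
    return pruned


if __name__ == "__main__":
    print(to_tf32(1 + 2 ** -10))   # representable with 10 mantissa bits
    print(to_tf32(1 + 2 ** -11))   # needs an 11th bit, so it is truncated
    print(prune_2_of_4([0.1, -0.9, 0.5, 0.05, 1.0, 2.0, -3.0, 0.0]))
```

The first function shows why TF32 trades a little precision for throughput while keeping FP32's range; the second shows that half of the weights in each group become zero, which is the property the sparse hardware path is said to exploit.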

Wrapping Up

Among the early adopters of this chip, tech giant Microsoft will be the first to use NVIDIA A100 GPUs. Mikhail Parakhin, corporate vice president at Microsoft, stated, “We will train dramatically bigger AI models using thousands of NVIDIA’s new generation of A100 GPUs in Azure at scale to push the state-of-the-art on language, speech, vision and multi-modality.”